The NPDA has been managed by the Royal College of Paediatrics and Child Health (RCPCH) since 2011 and is commissioned by the Healthcare Quality Improvement Partnership, with funding from NHS England and the Welsh Government. Data are collected by all teams who manage children and young people with diabetes and uploaded onto the data collection platform at the RCPCH. All teams participated in 2016–17 and data quality is improving (Warner, 2018). Data are extracted and then analysed at national, regional, sustainability and transformation partnerships, clinical commissioning group and local level. This enables benchmarking against the nationally-required clinical standards in the care of young people with type 1 and type 2 diabetes outlined by the National Institute for Health and Care Excellence (2015).

The audit also provides incidence reporting for type 1 diabetes, and prevalence reporting for all other types of diabetes in children and young people, in addition to diabetes-related complications among those with type 1 or 2 diabetes. A total of 29,153 children and young people with diabetes were included in the 2016–17 audit, an increase of 714 since the 2015–16 audit (RCPCH, 2018a).

Data quality and local accountability

Data quality starts at grass-roots level with local PDUs collecting data in their clinics. Although routine care is carried out in all clinics, the measurement and recording of data as part of such care is the indicator that it has truly occurred. All PDU members need a fundamental understanding of the local data collection tool and the data items required, so that they can help measure and record dataset items accurately. The current dataset can be viewed on the RCPCH website. PDUs should check their data before submission to the RCPCH data platform and take note of the data quality report that is returned, so they can find missing data or correct errors. It is now possible to make regular uploads and check data collection progress via the web portal collection tool throughout the audit year, not just during the submission window.
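
A pre-submission check of this kind can be sketched in a few lines. This is only an illustration: the field names below are hypothetical and are not the NPDA dataset items, which are defined on the RCPCH website, and the RCPCH data quality report may apply different rules.

```python
# Hypothetical field names for illustration; not the actual NPDA dataset items.
REQUIRED_FIELDS = ("patient_id", "date_of_birth", "visit_date", "hba1c_mmol_mol")


def find_missing(records, required=REQUIRED_FIELDS):
    """Map each record's position to the required fields that are absent or blank."""
    problems = {}
    for index, record in enumerate(records):
        missing = [field for field in required if record.get(field) in (None, "")]
        if missing:
            problems[index] = missing
    return problems


if __name__ == "__main__":
    sample = [
        {"patient_id": "A1", "date_of_birth": "2008-03-01",
         "visit_date": "2017-01-10", "hba1c_mmol_mol": 61},
        {"patient_id": "A2", "date_of_birth": "",
         "visit_date": "2017-02-14"},
    ]
    for index, fields in find_missing(sample).items():
        print(f"record {index}: missing {', '.join(fields)}")
```

Running a check like this before each upload, rather than once at the end of the audit year, gives the team time to trace missing items back to the clinic visit where they should have been recorded.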

Data are cleaned for analysis at the RCPCH and individualised summaries of results sent to PDU teams as early as possible before publication of the national report. This gives teams time to cross-check the reports with their own data to ensure they look as expected and to inform the RCPCH of any issues. The RCPCH is not responsible for the accuracy and completeness of the data submitted. This responsibility rests with the clinical teams/hospitals/sites/NHS Trust providing the service to patients. Issues with clinical audit data – whether case ascertainment, data completeness or data quality – must be addressed by the participating provider or Trust concerned.

Results and local variation

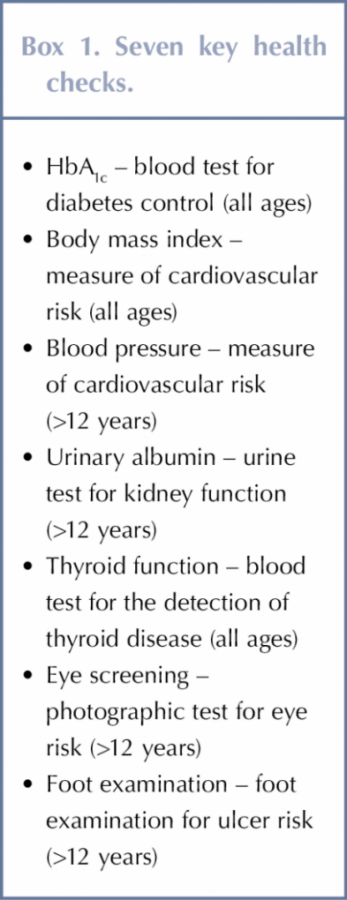

The proportion of young people aged 12 or older with type 1 diabetes recorded as receiving all seven key healthcare checks (see Box 1) varies considerably between units. National results showed an increase from 35.5% in 2015–16 to 43.5% in 2016–17 (see Figure 1; RCPCH, 2018a). There is considerable room for improvement, however, as some PDUs did not report a single patient who had received all recommended checks within the audit year.
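
As a rough illustration of how a unit might track this measure locally, the sketch below computes the percentage of eligible patients with every key check recorded. The names `check_1` to `check_7` are placeholders standing in for the seven checks in Box 1, and the eligibility rules applied by the national audit are not reproduced here.

```python
# Placeholder names only; the actual seven checks are those listed in Box 1.
KEY_CHECKS = tuple(f"check_{n}" for n in range(1, 8))


def percent_with_all_checks(patients):
    """Percentage of patients with every key check recorded as completed."""
    if not patients:
        raise ValueError("no eligible patients supplied")
    complete = sum(
        all(patient.get(check, False) for check in KEY_CHECKS)
        for patient in patients
    )
    return 100.0 * complete / len(patients)


if __name__ == "__main__":
    all_done = {check: True for check in KEY_CHECKS}
    one_missing = {**all_done, "check_7": False}
    print(percent_with_all_checks([all_done, one_missing, all_done, {}]))
```

A check that was carried out but never recorded counts as missing in this calculation, which mirrors the point that recording is the indicator that care has occurred.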

The PDUs who had not submitted complete healthcare checks should have been aware of this at the time of data submission. As all submissions have to be signed off by the lead clinician, there is an opportunity to search for missing data. If matters cannot be rectified at that stage, there is still an opportunity to plan how to resolve data quality issues before the next audit cycle. Identifying barriers to this annual provision and developing quality improvement initiatives will help mitigate these circumstances in the following year.

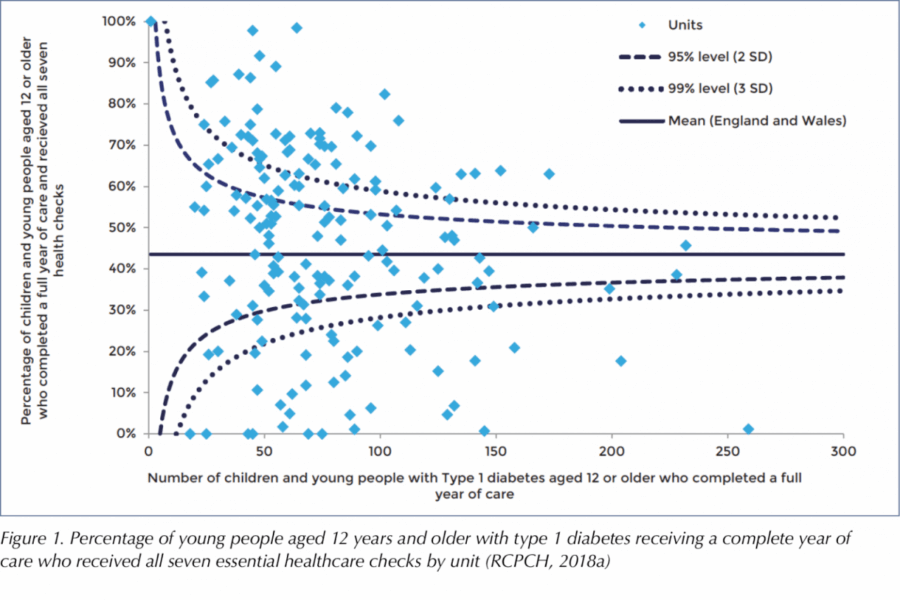

Considerable variability in HbA1c target outcomes persists between PDUs even after case-mix adjustment, which allows for benchmarking between PDUs. The case-mix adjustments applied to the 2016–17 data considered the effect of age, sex, ethnicity, duration of diabetes and deprivation to produce an adjusted mean HbA1c for each PDU.

Older children and young people with type 1 diabetes had poorer HbA1c levels than younger children.

Given the variations in HbA1c associated with different demographic and social characteristics, it is appropriate to adjust HbA1c figures to take account of the characteristics of patients, or case-mix, when comparing the performance of individual PDUs. Adjusted percentages of children and young people within each PDU having an HbA1c lower than the treatment target of 58 mmol/mol (7.5%) (see Figure 2) or higher than the upper limit of 80 mmol/mol (9.5%) (see Figure 3) were calculated, taking account of these confounders, to show the likelihood of being above or below these targets at each PDU. The funnel plots in Figures 2 and 3 show wide variation between centres even after adjustment.
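
For readers who work in both HbA1c units, the two thresholds can be converted from mmol/mol to percentage units with the IFCC-NGSP master equation. The sketch below also sorts a single unadjusted result into the bands used here; the function and band names are illustrative, and the case-mix adjustment applied by the audit is not reproduced.

```python
TARGET_MMOL_MOL = 58       # treatment target quoted in the text
UPPER_LIMIT_MMOL_MOL = 80  # upper limit quoted in the text


def mmol_mol_to_percent(ifcc):
    """Convert HbA1c from IFCC units (mmol/mol) to NGSP/DCCT units (%)."""
    return 0.09148 * ifcc + 2.152


def hba1c_band(ifcc):
    """Place one unadjusted HbA1c result relative to the two thresholds."""
    if ifcc < TARGET_MMOL_MOL:
        return "below_target"
    if ifcc > UPPER_LIMIT_MMOL_MOL:
        return "above_upper_limit"
    return "between"


if __name__ == "__main__":
    for value in (TARGET_MMOL_MOL, UPPER_LIMIT_MMOL_MOL):
        print(value, "mmol/mol =", round(mmol_mol_to_percent(value), 1), "%")
    # 58 mmol/mol = 7.5 %
    # 80 mmol/mol = 9.5 %
    print(hba1c_band(52), hba1c_band(65), hba1c_band(91))
```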

Children and young people and their families rarely choose which team they wish to be seen by; it is a postcode lottery of where their GP sends them. Some areas have massive social deprivation and other barriers to engagement, but these adjusted figures enable benchmarking at a local and regional level. PDUs should reflect on local variation within their regional network and consider what good practice could be shared between units.

Outliers

The RCPCH has a comprehensive policy on the detection and management of outlier status for clinical indicators for managed national clinical audits (RCPCH, 2017b). This is based on the Department of Health’s guidance for management of outliers (Healthcare Quality Improvement Partnership, 2017). More than two standard deviations from the expected target is deemed an ‘alert’; more than three standard deviations is deemed an ‘alarm’. This gives weight to the audit, as the RCPCH has to notify the Care Quality Commission (CQC) and the Health Inspectorate Wales of any confirmed alarm level outliers identified by the audit, and notify the CQC and NHS Improvement of any healthcare provider organisations that fail to respond appropriately (as described by the guidance) to notification of such status. The clinical lead has the opportunity to respond to this alarm level outlier status prior to their Trust’s chief executive officer, medical director and the CQC being notified.
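
The alert and alarm thresholds can be expressed as a simple rule. The following is a minimal sketch that assumes the expected value and standard deviation for a PDU are already known; the function name is hypothetical, it treats deviations in either direction alike, and the full RCPCH policy (RCPCH, 2017b) should be consulted for how outliers are actually identified and confirmed.

```python
def classify_outlier(observed, expected, sd):
    """Return 'alarm', 'alert' or 'none' from the distance in standard deviations."""
    if sd <= 0:
        raise ValueError("sd must be positive")
    distance = abs(observed - expected) / sd
    if distance > 3:
        return "alarm"  # more than three standard deviations
    if distance > 2:
        return "alert"  # more than two standard deviations
    return "none"


if __name__ == "__main__":
    # Illustrative numbers only, not audit results.
    print(classify_outlier(observed=70.0, expected=60.0, sd=4.0))  # alert
    print(classify_outlier(observed=73.0, expected=60.0, sd=4.0))  # alarm
```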

Responsibility for resolving the issues associated with outlier status lies locally. Those with outlier status should provide a copy of their action plan to the CQC (England) or the Health Inspectorate Wales (Wales). Outlier status helps complete the usual principles of the Plan-Do-Study-Act cycle (Agency for Healthcare Research and Quality, 2008), but all PDUs should create an action plan and regularly review their data, as now indicated in the Children and Young People Diabetes Quality Programme measures (RCPCH, 2018b). It is now possible to use the online reporting tool to facilitate local review (RCPCH, 2017c).

Conclusion

The NPDA is a powerful tool that enables PDUs to benchmark the care they provide against nationally-agreed standards. Active participation in the audit should be a fundamental part of quality improvement and not just a task to be completed.

There are many opportunities to complete the audit action cycle prior to publication of the national report each year. Each PDU should take ownership of their data to help improve the measured outcomes for children and young people with diabetes.
